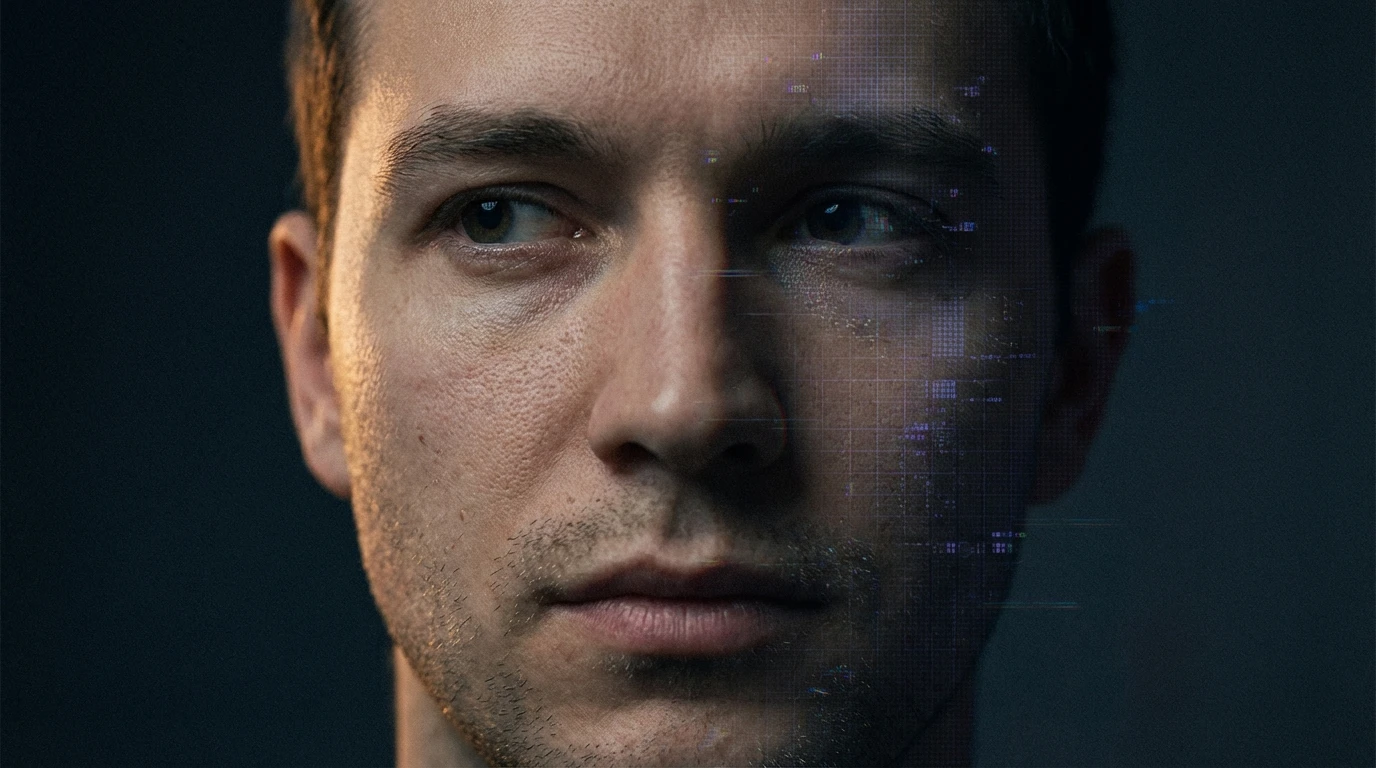

Deepfakes and artificial intelligence in the work of the international detective agency PalmGroup

In recent months we have observed a sharp rise in cases where a key piece of evidence – a recording, photo, video or voice message – turns out to be a manipulation created with the help of artificial intelligence. Deepfakes are no longer a rare phenomenon confined to entertainment. They are a real tool for fraud, blackmail and evidence fabrication in family, business and criminal cases.

At the international detective agency PalmGroup, verifying the authenticity of digital materials has become a daily part of our work – in Poland, Germany and across Europe.

What is a deepfake and why is it an evidentiary problem?

A deepfake is audio or video material generated by artificial intelligence algorithms in such a way that it imitates a real person – their face, voice, speech patterns, even their facial expressions. A few years ago, creating a convincing deepfake required advanced technical knowledge and hundreds of hours of source recordings. Today, a few seconds of someone's voice from social media and publicly available AI tools are enough to generate:

• fake phone conversation recordings

• fabricated voice messages

• videos in which a person "says" or "does" things that never happened

• compromising photos placed in a falsified context

👉 For a detective this means one thing: every digital item presented as evidence must be verified before it enters the case file or the courtroom.

Types of AI manipulation we encounter in investigations

In PalmGroup's practice, we most often deal with five types of manipulation:

1. Voice cloning

Fraudsters impersonate a family member or company executive to obtain a bank transfer or confidential information. Recordings presented as "evidence" in divorce cases are also increasingly turning out to be synthetic.

2. Deepfake video

A video showing a partner in a compromising situation, intended to force a favourable divorce settlement. In reality, the face has been digitally overlaid onto an actor or another person.

3. Fake chat screenshots

Browser-generated screenshots of apps like WhatsApp or Messenger depicting conversations that never took place on real devices.

4. Manipulated documents and invoices

In business cases we increasingly encounter fabricated invoices, contracts and bank statements generated or modified by AI tools.

5. Synthetic identities

Completely fake people – with a generated face, LinkedIn profile and professional history – used for business, recruitment and romance fraud.

How PalmGroup verifies the authenticity of digital evidence

Verifying a digital item cannot be reduced to a single click. It is a multi-layered process that combines technical tools with classic detective work:

• analysis of file metadata (EXIF, edit history, source device)

• detection of compression artefacts and lighting inconsistencies

• audio spectrogram analysis for signs of synthesis

• comparison of facial expressions and mouth movement with reference recordings

• context verification – whether the person in question could really have been in that place at that time

• reverse image search and source material lookup

👉 AI technology alone is not enough. Only the combination of digital tools with field experience makes it possible to distinguish a genuine piece of evidence from a manipulation with a high degree of certainty.
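As an illustration of the first layer (file metadata), the Python sketch below lists the header segments of a JPEG file and reports whether an EXIF or XMP block is present. It is a minimal example under stated assumptions, not a description of PalmGroup's tooling: the function names `jpeg_header_segments` and `summarize_metadata` are hypothetical, and the parser assumes a well-formed file without padding bytes between segments.

```python
import struct

EXIF_PREFIX = b"Exif\x00\x00"
XMP_PREFIX = b"http://ns.adobe.com/xap/1.0/\x00"


def jpeg_header_segments(data: bytes) -> list[tuple[int, bytes]]:
    """Return (marker, payload) pairs for the segments before the image data.

    Simplified sketch: assumes a well-formed file with no padding bytes
    between segments.
    """
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG file: SOI marker missing")
    segments = []
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError(f"expected a marker at offset {pos}")
        marker = data[pos + 1]
        if marker in (0xDA, 0xD9):  # start of scan or end of image
            break
        # The 2-byte big-endian length counts itself but not the marker.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        if length < 2:
            raise ValueError(f"invalid segment length at offset {pos}")
        segments.append((marker, data[pos + 4:pos + 2 + length]))
        pos += 2 + length
    return segments


def summarize_metadata(data: bytes) -> dict:
    """Report which metadata containers appear in the JPEG header."""
    segments = jpeg_header_segments(data)
    app1 = [payload for marker, payload in segments if marker == 0xE1]
    return {
        "segment_markers": [f"0xFF{marker:02X}" for marker, _ in segments],
        "has_exif": any(p.startswith(EXIF_PREFIX) for p in app1),
        "has_xmp": any(p.startswith(XMP_PREFIX) for p in app1),
    }
```

The function takes the raw bytes of a file, for example the result of reading it in binary mode. A missing EXIF block is not proof of manipulation, since many messaging and social media platforms strip metadata on upload. The result is one signal to weigh alongside the other layers listed above.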

Examples from detective practice

Fake recording in a divorce case

A client received an alleged recording of his partner with a "lover". Spectrogram analysis revealed digital traces of voice synthesis – the recording had been generated from a few seconds of an Instagram video.

"CEO fraud"

An accountant at a Polish company received a call from the "CEO" ordering an urgent transfer. The voice sounded identical to the real one. Our analysis confirmed that it was a clone generated from an earlier press interview.

Blackmail with photos

A public figure received a ransom demand together with compromising photos. The analysis showed that the face had been digitally overlaid onto a body taken from material available online.

What to do if you suspect you have fallen victim to manipulation

• do not give in to time pressure – fraudsters rely on panic

• secure the material in its original form, without modification or recompression

• do not contact the person who supposedly appears in the material yourself

• consult a professional detective before making legal or financial decisions

• in case of blackmail – report the matter to the police and keep the entire correspondence

👉 The earlier verification begins, the greater the chance of capturing traces that reveal the origin of the forgery.
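One common way to show later that material was preserved "in its original form" is to record a cryptographic hash of the file as soon as it is secured. The Python sketch below illustrates the idea under stated assumptions: the function names `fingerprint_file` and `is_unchanged` and the fields of the record are hypothetical, and the example does not describe any specific forensic procedure.

```python
import hashlib
import os
from datetime import datetime, timezone


def fingerprint_file(path: str, chunk_size: int = 1 << 20) -> dict:
    """Hash a file without modifying it and return a small custody record."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:  # read-only: the original is never altered
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return {
        "file": os.path.basename(path),
        "size_bytes": os.path.getsize(path),
        "sha256": digest.hexdigest(),
        "recorded_at_utc": datetime.now(timezone.utc).isoformat(),
    }


def is_unchanged(path: str, record: dict) -> bool:
    """Check that a file still matches a previously recorded fingerprint."""
    return fingerprint_file(path)["sha256"] == record["sha256"]
```

Any later edit, re-save or recompression changes the file's bytes and therefore its SHA-256 value, so a matching hash indicates that the copy under analysis is byte-for-byte the file that was originally secured.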

Legal aspects of deepfakes in Poland and Europe

In Poland and the other countries of the European Union, the regulation of artificial intelligence is still taking shape. The EU AI Act introduces an obligation to label AI-generated material, and the criminal code provides for liability for document forgery and fraud. In civil cases such as divorce, alimony or inheritance, a deepfake submitted as evidence may be challenged, but only if the party contesting it can prove that it is not authentic. That is why a thorough expert report and documentation of the verification process are today just as important as the evidentiary material itself.

Why AI verification is a new competence for detectives

Classic detective work relied on observation, documentation and witness interviews. Today, digital analysis has joined that toolkit. At the international detective agency PalmGroup, we invest in:

• specialised software for deepfake detection

• team training in digital analysis and multimedia forensics

• cooperation with experts in IT security and cybercrime

• integration of traditional investigative methods with AI tools

Summary

Deepfakes and AI tools are changing the way evidence is created – and the way we evaluate it. This is a challenge, but also an opportunity for detectives who know how to combine operational experience with modern technology. At PalmGroup we treat every digital item with due care and subject it to thorough verification before it becomes the basis for any decision.

Discretion • Effectiveness • International experience

If you suspect you have fallen victim to digital manipulation or need professional verification of evidentiary material – contact PalmGroup. We look forward to hearing from you.